About

This is the documentation of an advanced practical by Richard Schönpflug and Philipp Rettig under the supervision of Dr. Susanne Krömker. Our work is based on Pheakdey Nguonphan's thesis "Computer Modeling, Analysis and Visualization of Angkor Wat Style Temples in Cambodia" [2], in which a 3D model of the main temple of Angkor Wat was created for rendering purposes. Our goal is to enable the exploration of this model in virtual reality by adding appropriate controls, minimizing nauseating elements and implementing additional features to navigate and experience the virtual world. We used the Oculus Rift VR goggles and the Unity game engine for this project.

Development

Model

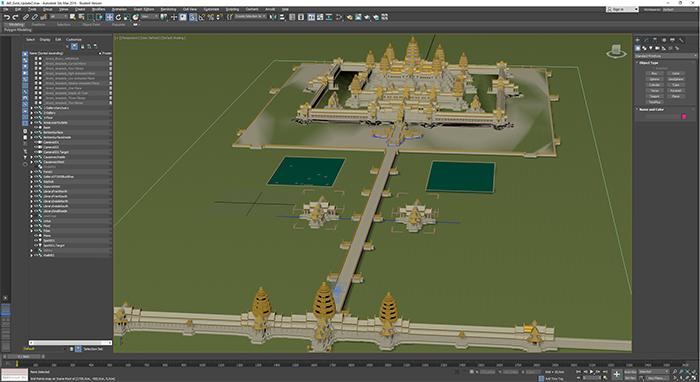

As a first step, we opened the provided .max project file in Autodesk 3ds Max to export it as a Unity-compatible model. We noticed that some textures were missing: they were not included in the provided archive, but only linked locally on the creator's laptop. This did not concern us, because we planned to redo most of the textures anyway. As the export format we chose FBX, a format developed by Autodesk that Unity can import and that offered the most straightforward export process. We also tried the open Wavefront OBJ format, but the exported model showed various geometric errors, so we stuck with FBX.

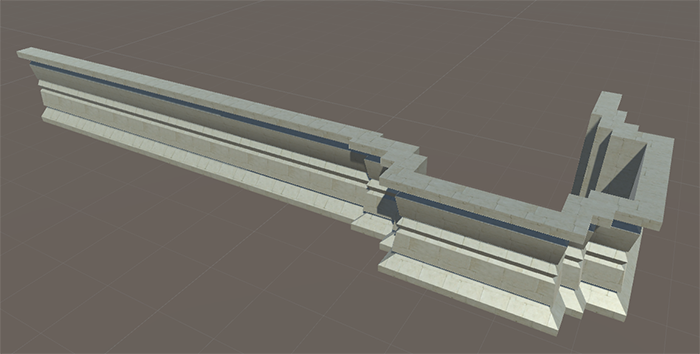

Because the model was generated from mathematical formulae, some objects overlapped exactly, leading to z-fighting. This z-fighting is not visible when rendering a single high-definition image in 3ds Max, as the original work did, but it is very visible in an interactive environment. We started our work on the model by removing most of the duplicated geometry and completely redesigning other parts. The generated model is not divided into small regular chunks like a manually crafted model would be. For this reason, the model contains some rather unusual pieces of geometry; some examples are pictured below.

Because of these irregularly formed pieces, we could not eliminate all z-fighting and other problems such as small gaps or floating objects. Doing so would have required remodelling vast amounts of objects and scenery, which was not feasible in the available time. We did our best to mask the remaining errors, which are only visible on very close inspection or if the observer already knows where to look.

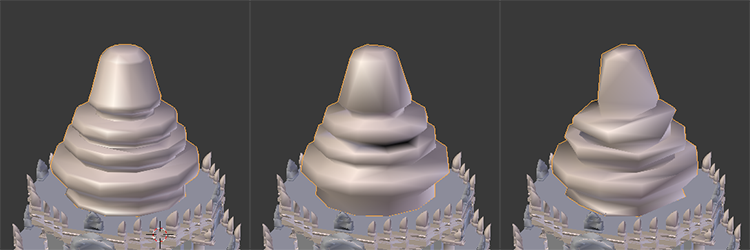

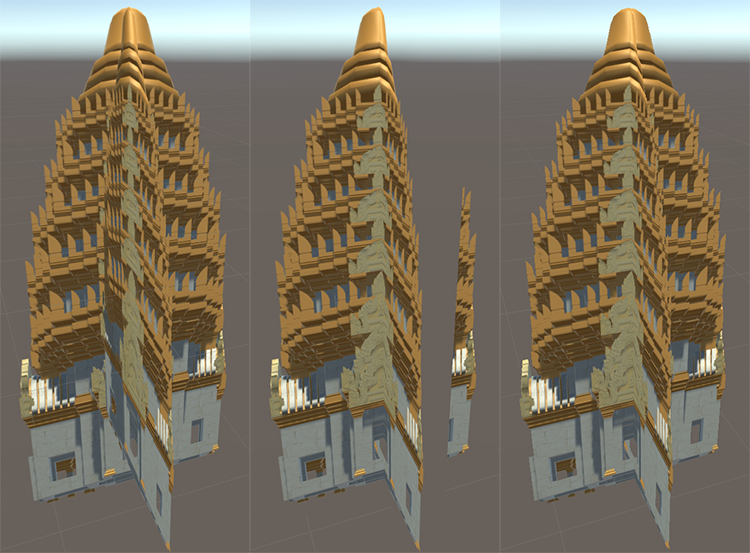

The procedurally generated nature of the model also led to another challenge in the development process. Because of the high resolution of the objects, with sometimes thousands of vertices in a single piece of geometry, we faced a very high number of draw calls when viewing the whole Angkor temple at once. This led to framerate drops below 60 FPS. Low and inconsistent framerates are a major cause of nausea in VR, so we had to reduce the number of draw calls sent to the graphics card. At first we tried to simplify the geometry with various tools: the simplification options of 3ds Max, Blender and some custom Unity scripts that reduce the number of vertices in the model. Blender was the most promising approach, but it eventually also failed to produce usable results. The simplification algorithms only work as intended on regular, basic objects like spheres and rectangles. The extremely irregular composition of the model allowed only minor reductions of complexity before holes and artifacts appeared, as shown below.

This rather regular example already shows the challenge of reducing the geometry of a model that also contains far more complicated areas. Even the fairly strong simplification in the middle case, which removes around 70% of the vertices, did not suffice to achieve usable framerates, while already destroying the integrity of the model.

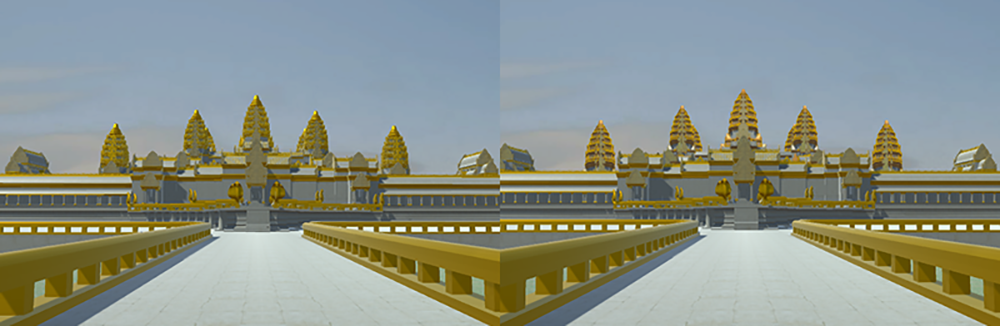

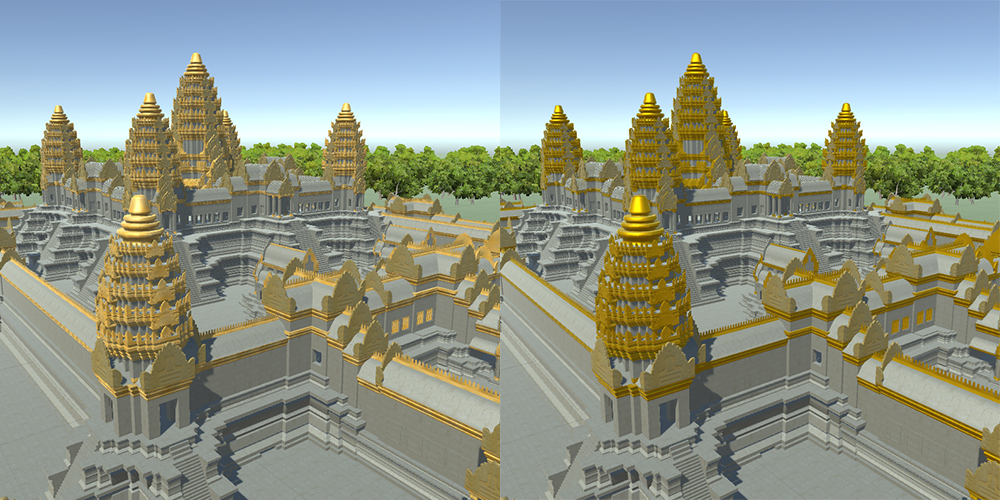

For this reason we used billboarding to massively reduce the number of draw calls. We captured textures of the five towers of the temple and of the surrounding walls. While the user stands outside the temple, the walls and towers are replaced by the billboards. This is not noticeable without a direct comparison with the real model. The full 3D model stays loaded in the background to allow a quick switch from billboards to 3D geometry when the user enters the temple. This makes exploration of both the outside and the inside of the temple possible at steady framerates of 60 FPS and above.
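The switch between billboards and full geometry can be sketched as a simple containment test. This is only an illustration in Python, not the project's Unity script: the `Box` footprint, its coordinates and the function name are hypothetical, and we assume the switch is driven by whether the player stands inside the temple's footprint.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned rectangle on the ground plane (x, z); a stand-in for the temple footprint."""
    min_x: float
    min_z: float
    max_x: float
    max_z: float

    def contains(self, x: float, z: float) -> bool:
        return self.min_x <= x <= self.max_x and self.min_z <= z <= self.max_z


def select_representation(footprint: Box, x: float, z: float) -> str:
    """Return which representation should be visible for a player at (x, z)."""
    # Inside the temple the real geometry is shown; outside, the cheap billboards.
    return "mesh" if footprint.contains(x, z) else "billboard"


# Made-up footprint, purely for illustration.
temple = Box(-50.0, -50.0, 50.0, 50.0)
```

Because both representations stay loaded, the switch only toggles visibility, which is why it can happen without a noticeable pause.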

Using sprites as billboards to replace the 3D geometry required some changes to the sprite vertex and fragment shaders. Because each billboard consists of two sprites that intersect at a 90° angle, overlapping and depth buffer issues occurred. As shown in the image below, the unmodified sprite shader, which does not write to the depth buffer, cannot sort the two sprites correctly: since both are at the same position, one is drawn on top of the other depending on the viewing angle. This is very obvious and distracting. Enabling depth writing in Unity's sprite shader by changing its "ZWrite Off" setting to "ZWrite On" solves this issue, but it introduces another one. Sprites allow transparent parts in the texture but are normally not used with depth writing, so the sprites now occlude objects behind them even where the texture is transparent. This can be fixed in the sprite fragment shader by clipping against a predefined alpha threshold: fragments with alpha values below this threshold are discarded and can no longer occlude background objects. See the file "Sprites-DefaultSingle.shader" for the vertex shader and "UnitySpritesAlpha.cginc" for the fragment shader.

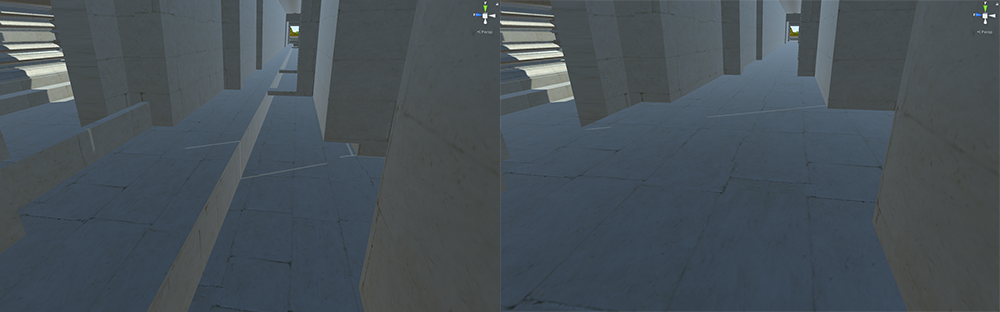
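The effect of the alpha threshold can be illustrated with a small software model of a depth-buffered pixel. This is a Python sketch, not the shader code from the project: the cutoff value of 0.5 and all function names are assumptions. In a Cg/HLSL fragment shader, the same test is typically expressed with the `clip` intrinsic.

```python
ALPHA_CUTOFF = 0.5  # assumed threshold; the project's actual value is not stated


def shade_fragment(rgba, depth, alpha_cutoff=ALPHA_CUTOFF):
    """Return (color, depth) to write, or None if the fragment is discarded."""
    alpha = rgba[3]
    if alpha < alpha_cutoff:
        # Discarded fragments write neither color nor depth,
        # so they cannot occlude anything behind them.
        return None
    return rgba, depth


def resolve_pixel(fragments, background):
    """Depth-buffered resolve: the nearest surviving fragment wins."""
    best_color, best_depth = background, float("inf")
    for rgba, depth in fragments:
        result = shade_fragment(rgba, depth)
        if result is None:
            continue
        color, frag_depth = result
        if frag_depth < best_depth:
            best_color, best_depth = color, frag_depth
    return best_color


sky = (0.5, 0.7, 1.0, 1.0)
transparent_texel = ((0.0, 0.0, 0.0, 0.0), 1.0)  # empty part of the near sprite
tower_texel = ((0.6, 0.5, 0.4, 1.0), 5.0)        # opaque object further away
```

With the clip in place, `resolve_pixel([transparent_texel, tower_texel], sky)` returns the tower color, because the transparent texel never reaches the depth buffer.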

Another big change to the model was ensuring that the complete temple is accessible on foot. This required various changes and additions. First, we needed to add floors to almost all of the hallways and rooms: the model was originally designed to be rendered from the outside and only partially from the inside, so not every room was completely modeled. Second, we added over 80 stairways to make the different floors and rooms reachable. As a last step, we gave the complete geometry mesh colliders to prevent the user from clipping through the ground and walls.

We also replaced all of the missing textures and some of the existing ones to improve the overall look of the model.

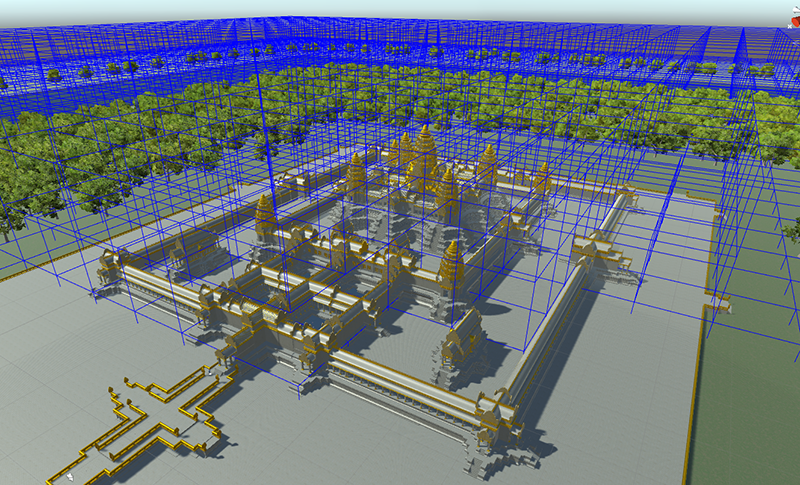

Our last work on the model was the computation of occlusion culling for the whole scene. This was done using the included occlusion culling method in Unity.

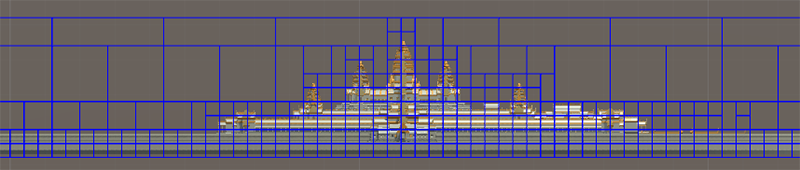

Occlusion culling in progress. Larger volumes in empty spaces and more refined cull boxes for more complex geometry are applied automatically by Unity. Pictured below is the finished culling in 2D.

Our various changes gave us a completely accessible, good-looking model in virtual reality. The simulation runs smoothly and never dips below 60 FPS, both with the Oculus headset and on a high-resolution WQHD monitor. Performance was tested on two systems with 16 GB RAM each: one with an AMD Ryzen 5 1600X processor and an Nvidia GTX 970 graphics card, and one with an Intel i7-4790K processor and an AMD Radeon R9 390 graphics card.

Controls

For debugging purposes we implemented a first person controller to move the camera via keyboard and mouse. Unity provides the FirstPersonController asset for this use case, which offers all the features needed to control the player with the WASD keys and rotate the camera with the mouse. This is easily extended to head tracking with the Oculus by ticking the "Virtual Reality Supported" checkbox in the player settings. Head tracking and mouse input then both affect the camera rotation and interfere with each other, so we added a boolean flag to the first person controller script that disables mouse rotation, making it easy to toggle VR mode.

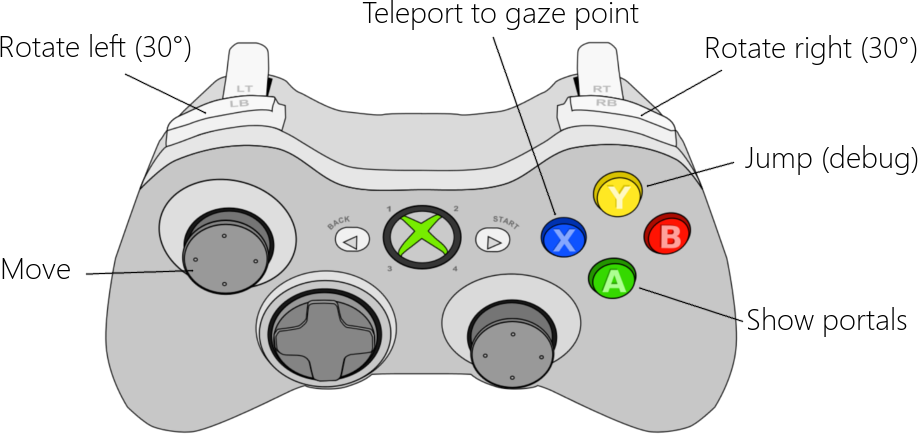

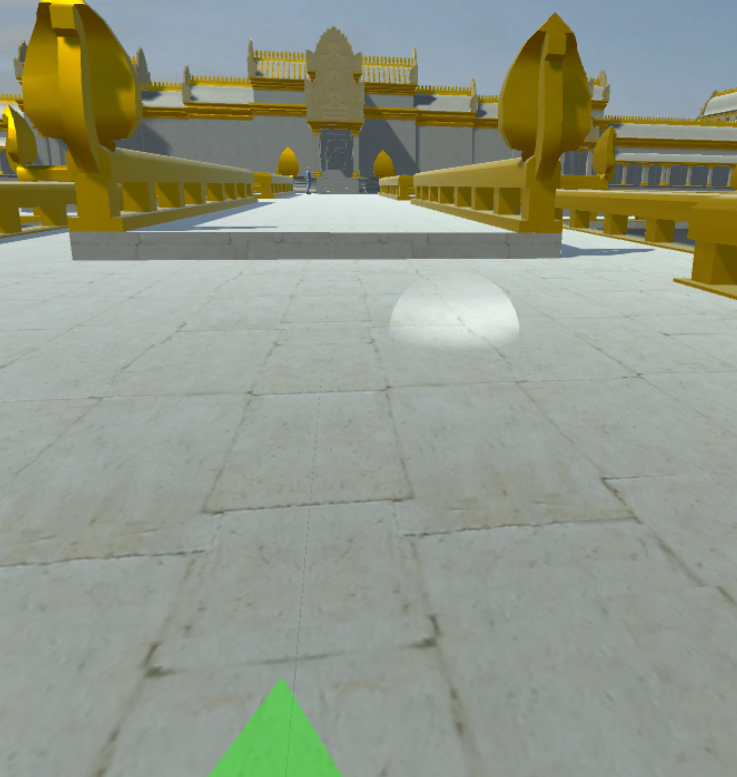

With the VR headset on, using the keyboard is very inconvenient, so we switched to an Xbox controller. Character movement again translates automatically to the gamepad. Moving the camera forward, backward, left and right alone does not suffice to explore the model, though, so we added rotation by a fixed angle (30°). We chose this over the alternatives because defining "forward" as the direction the user is currently looking would make moving nauseating, and rotating smoothly with the right analog stick would be far more nauseating than rotating in fixed steps. Since the forward direction is decoupled from the viewing direction, it is easy to lose track of it, so we project an arrow onto the floor in front of the camera to indicate it.
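The fixed-angle rotation and the body-relative movement can be sketched as follows. This is a Python illustration under our own assumptions, not the project's controller script: the function names are hypothetical, yaw is measured clockwise when seen from above with 0° looking along +z (as in Unity's coordinate system), and head orientation is deliberately ignored.

```python
import math

SNAP_ANGLE_DEG = 30.0  # fixed rotation step from the text


def snap_turn(body_yaw_deg, direction):
    """Rotate the body by one fixed step; direction is +1 (right) or -1 (left)."""
    return (body_yaw_deg + direction * SNAP_ANGLE_DEG) % 360.0


def move(position, body_yaw_deg, stick_x, stick_y, speed, dt):
    """Move on the ground plane relative to the body yaw, not the head direction."""
    yaw = math.radians(body_yaw_deg)
    forward = (math.sin(yaw), math.cos(yaw))   # yaw 0 looks along +z
    right = (math.cos(yaw), -math.sin(yaw))
    x, z = position
    x += (forward[0] * stick_y + right[0] * stick_x) * speed * dt
    z += (forward[1] * stick_y + right[1] * stick_x) * speed * dt
    return (x, z)
```

Because `move` only depends on the body yaw, looking around with the headset never changes the walking direction, which is exactly why the arrow on the floor is needed as a reminder.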

To interact with elements in the virtual world, we added a gazing system, where the player looks at an object long enough to activate it. Looking at an interactive object spawns a red circle in front of the camera, which gradually fills up. When the circle is full, the object is triggered. For this to work, the user has to be close to the object and have an unobstructed view of it.
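A gaze system of this kind is essentially a dwell timer. The following Python sketch shows one possible structure; the class name, the dwell time and the maximum distance are assumptions, and the result of the gaze ray cast (which object is hit and how far away it is) is passed in from outside.

```python
class GazeTimer:
    """Dwell timer: an object triggers after being looked at long enough."""

    def __init__(self, dwell_seconds=2.0, max_distance=5.0):
        self.dwell_seconds = dwell_seconds  # assumed value
        self.max_distance = max_distance    # assumed value
        self.target = None
        self.elapsed = 0.0

    def update(self, target, distance, dt):
        """Advance by dt seconds. Returns (fill, triggered_target_or_None).

        target is the interactive object hit by the gaze ray, or None if the
        view is obstructed or nothing interactive is hit.
        """
        if target is None or distance > self.max_distance:
            self.target, self.elapsed = None, 0.0
            return 0.0, None
        if target != self.target:
            # Gaze moved to a different object: restart the circle.
            self.target, self.elapsed = target, 0.0
        self.elapsed += dt
        fill = min(self.elapsed / self.dwell_seconds, 1.0)
        if fill >= 1.0:
            self.elapsed = 0.0  # avoid re-triggering on every following frame
            return 1.0, target
        return fill, None
```

The returned `fill` value between 0 and 1 would drive the red circle, and the second return value tells the caller when to activate the object.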

Moving in virtual reality is often nauseating as well, so it is common practice to implement some kind of teleportation [1]. We added two methods to help with navigating the world: portals to special places of interest and teleportation by gazing at the desired destination. The portals are spawned by pressing and holding a button on the gamepad. By looking long enough at one of the destinations, the user is teleported there.

The second kind of teleportation is also activated by pressing and holding the respective button. A sphere appears, indicating the position the user can teleport to. The location of the sphere follows the viewing direction of the user. Releasing the button starts the teleportation process. Checking the normal vector of the targeted surface makes it possible to restrict the target to flat surfaces to which the user can be teleported safely.
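The normal check can be sketched as a comparison between the surface normal and the world up vector. This Python snippet is an illustration only: the function name and the 10° slope limit are assumptions, and the normal is assumed to come from the ray cast that positions the sphere.

```python
import math

MAX_SLOPE_DEG = 10.0  # assumed tolerance for what counts as "flat"


def is_safe_teleport_target(normal, max_slope_deg=MAX_SLOPE_DEG):
    """Accept a surface only if its normal points (almost) straight up."""
    nx, ny, nz = normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        return False
    # Cosine of the angle between the normal and the up vector (0, 1, 0).
    cos_angle = ny / length
    return cos_angle >= math.cos(math.radians(max_slope_deg))
```

Floors pass the test, while walls (horizontal normal) and ceilings (normal pointing down) are rejected, so the sphere can only be placed where the user can stand.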

Abruptly changing the position of the camera also has a nauseating and irritating effect, which we eliminated by simulating blinking. When the teleportation process is initiated, black bars will appear, imitating closing eyelids. As soon as the eyelids are completely closed, the user will be teleported. When the process is finished, the eyelids will open again, leaving the user at the desired position.
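The ordering of the blink transition (close, move, open) can be sketched as a sequence of frames. This is a Python illustration with hypothetical names and frame counts; the eyelid closure is a value between 0 (open) and 1 (fully closed).

```python
def blink_teleport(start, destination, close_frames=3, open_frames=3):
    """Return a list of (eyelid_closure, camera_position) pairs, one per frame."""
    frames = []
    # Phase 1: eyelids close while the camera stays at the start position.
    for i in range(1, close_frames + 1):
        frames.append((i / close_frames, start))
    # Phase 2: the view is fully black, so the camera can jump unseen.
    frames.append((1.0, destination))
    # Phase 3: eyelids open again at the destination.
    for i in range(open_frames - 1, -1, -1):
        frames.append((i / open_frames, destination))
    return frames
```

The important property is that the camera position only changes between two frames in which the eyelids are completely closed, so the user never sees the jump itself.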

Extras

The render-optimized trees used originally do not work in real-time environments. We therefore replaced them with Unity SpeedTrees to get a more realistic and dense forest around the temple. The SpeedTrees have built-in level-of-detail stepping and billboards, which ensures good performance.

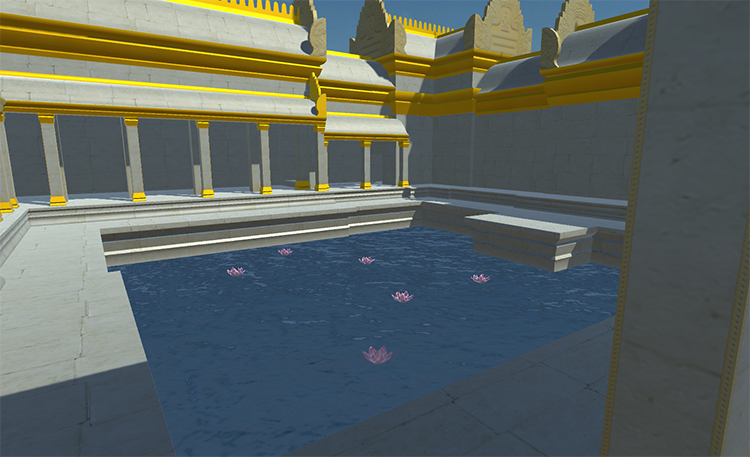

We also filled the static ponds with animated water, including water lilies that float on the waves.

Furthermore, we added a skybox to help disguise the borders of the map, which also results in a more realistic simulation.

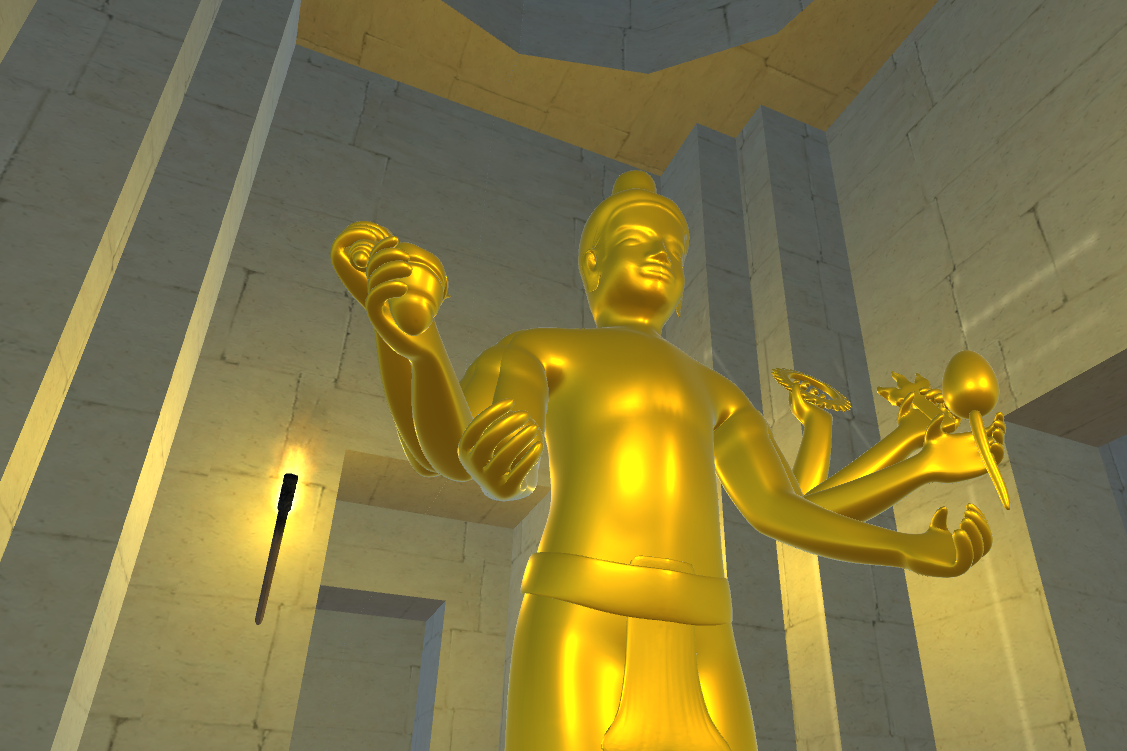

As the central point of the model, we wanted the Vishnu statue to draw attention, so we gave it an improved gold texture and illuminated the room with animated torches.

To help the user discover the temple, we added a guide at the entrance. He greets the user when approached and can be activated with the gaze mechanism. When activated, he starts walking through the temple, giving information on what can be seen via text-to-speech. His movement is controlled by predefined waypoints, which he traverses using Unity's NavMesh pathfinding. Walking and idle animations were taken from Unity's motion capture set.
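The waypoint logic behind the guide can be sketched independently of the pathfinding. In this Python illustration, straight-line movement stands in for Unity's NavMesh pathfinding, and the function name, arrival radius and coordinates are hypothetical.

```python
import math


def step_guide(position, waypoints, index, speed, dt, arrive_radius=0.5):
    """Advance the guide by one time step.

    Returns (new_position, new_index); index == len(waypoints) means the
    tour is finished. Positions are (x, z) pairs on the ground plane.
    """
    if index >= len(waypoints):
        return position, index
    target_x, target_z = waypoints[index]
    x, z = position
    dx, dz = target_x - x, target_z - z
    distance = math.hypot(dx, dz)
    step = speed * dt
    if distance <= max(step, arrive_radius):
        # Close enough: snap to the waypoint and head for the next one.
        return (target_x, target_z), index + 1
    return (x + dx / distance * step, z + dz / distance * step), index
```

In such a setup, reaching a waypoint would also be the natural moment to start the text-to-speech explanation for that location.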

Future Work

The current scene can now easily be explored by the user, but it still leaves room for improvement. Starting with the forest, additional types of trees could be added for more variety. Adding foliage and rocks to the model would further increase realism. Most of the textures are still fairly basic, so higher-resolution textures that match the corresponding surfaces could improve the visuals drastically. Animated elements like particle effects would also make the world appear more lively, which is an important aspect of virtual reality.

In its current state, the guide is fairly basic as well. It serves more as a proof of concept than as a real tour around the temple. Improving the route and the information given via text-to-speech would make for an interesting and helpful element in the simulation.

Finally, we used the Oculus Rift Development Kit 2, which is very outdated hardware. Switching to newer hardware such as the consumer version of the Oculus Rift or the HTC Vive would greatly improve the experience without any changes to the software.

References

[1] Oculus VR. Introduction to Best Practices. 2017. Accessed on June 13 at https://developer.oculus.com/design/latest/concepts/bp_intro/.

[2] Pheakdey Nguonphan. Computer Modeling, Analysis and Visualization of Angkor Wat Style Temples in Cambodia. 2009.